Human-AI Platform for live support

Create your knowledgebase from your website and documents.

Meet your AI Assistants: GPT4, Claude 3.5, Mistral, Llama (LLMs).

Beautiful Customizable UI

Build your custom UI on top of the open source Nexai React UI Library.

Nexai AI chat assistant and AI Search are built upon ShadCN UI, which offers beautiful, customizable React UI components based on RadixUI.

    import { ChatSidePanelShadowDom } from 'nexai.js/chat-side-panel'

    // customize with https://github.com/nexai-cloud/nexai.js
    // your public api key is available in your project settings
    const nexaiApiKey = String(process.env.NEXT_PUBLIC_NEXAI_API_KEY)

    // display the react side panel chat component
    export default () => <ChatSidePanelShadowDom nexaiApiKey={nexaiApiKey} />

The Nexai UI Components are also available as copy-and-paste HTML:

    <!-- nexai ai chat side panel -->
    <script src="https://nexai.site/ai/chat-side-panel.js"></script>
    <script>
      // edit the configurations for your website
      NexaiChatSidePanel.render({
        // get your api-key in project settings
        nexaiApiKey: 'api-key',
      })
    </script>
    <!-- end nexai ai chat side panel -->

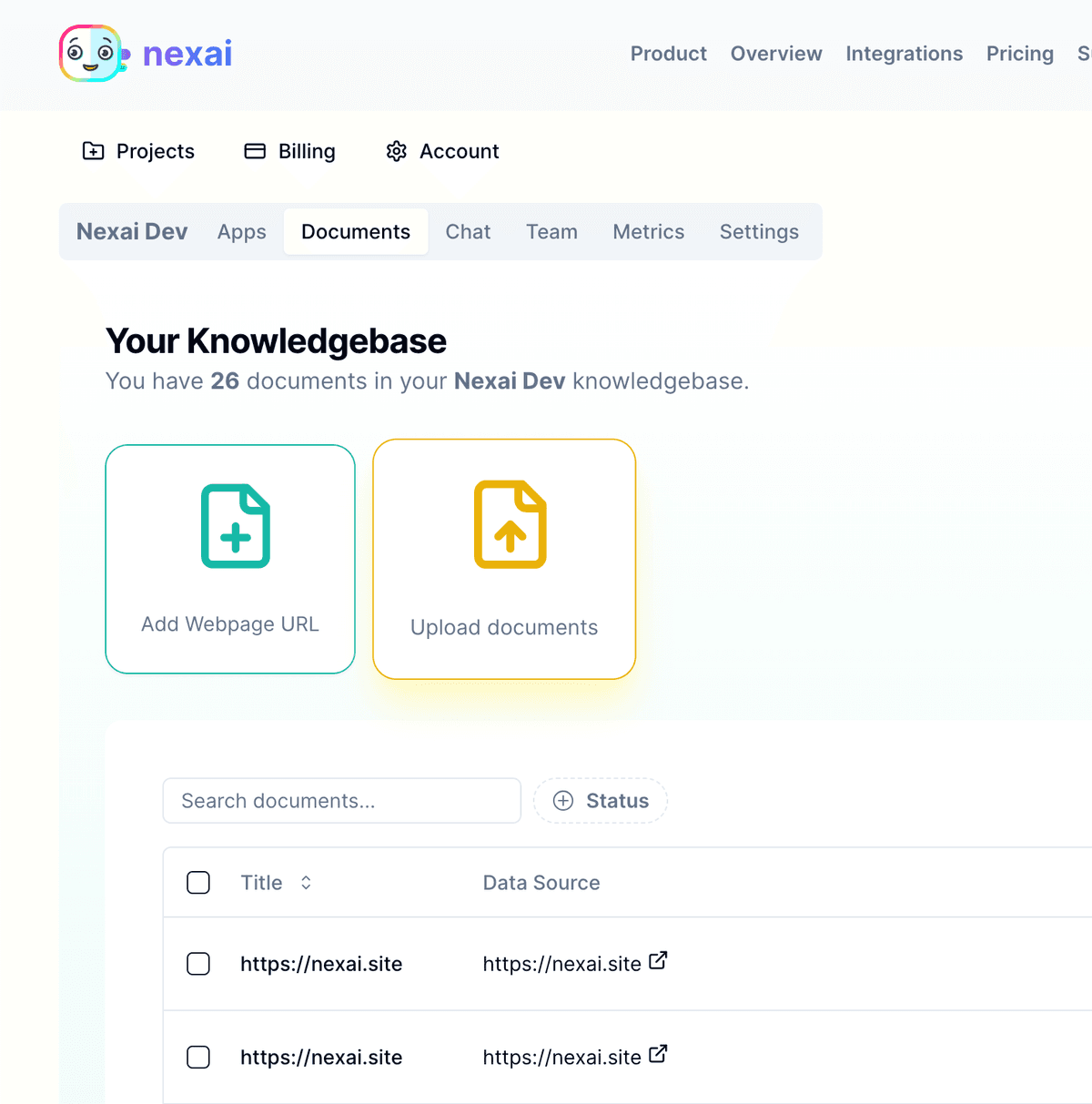

AI Knowledgebase

Add your website or upload documents to your AI Knowledgebase.

Nexai links your documents to an AI-predicted set of questions and answers, chat simulations and search phrases. This set evolves with new queries, searches and chat resolutions.

The Knowledgebase can be used internally, to help your company understand its own documents, or externally, to help customers find and understand your information automatically.

The Knowledgebase service is exposed via a simple REST API and JS library used by the AI chat assistant and semantic search React Components.

Beta Integrations

Nexai supports any platform and has specific integrations for the following platforms.

Copy and paste HTML code for HTML website chat embed.

JavaScript library to extend Nexai: build realtime search, Telegram bots or other AI applications.

TypeScript version or JS library with full type definitions.

Integrated directly with NextJS or extensible for other platforms.

A React library for creating a support chat styled with Tailwind, Radix UI and shadcn.

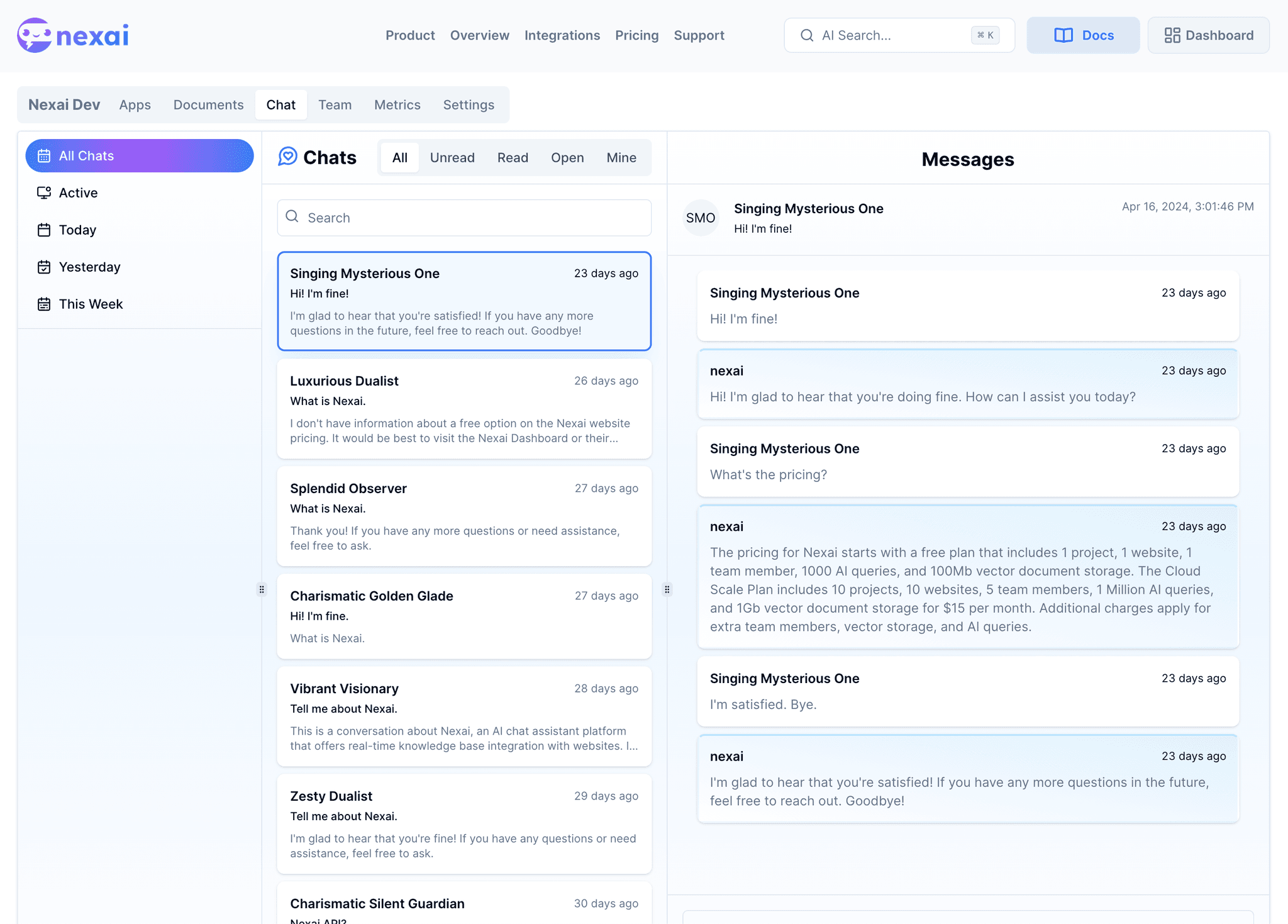

Hosted dashboard for managing projects, websites, teams, documents, apps and support chat assistance.

Add documents to index from Amazon S3 or other cloud providers.

Super fast chat responses from the API. A response is generated in under 1 second.

Multi-device support for the chat screen and admin panel.

Upcoming Integrations

Join our beta test group to be the first to get support for additional platforms.

Integrated Telegram chatbot and chat button on your website.

Integrated WhatsApp chatbot and chat button on your website.

Integrated Discord chatbot and chat button on your website.

Fully integrated AI support chat for WordPress.

Fully integrated AI support chat for Shopify.

Fully integrated AI support chat for Joomla.

Nexai is built to be used without coding, yet it is extensible enough to build any AI application.

Scalable Pricing

Start free and scale up as you grow

Developer Plan

- 1 project
- 1 website
- 1 team member
- AI Chat Assistant per visitor
- Personal AI Chat Assistant per project
- Team Support Helpdesk
- 1000 AI queries
- 10 vector documents index
- 100 MB storage
- GPT3.5, Mistral, Llama 7B included
- Add GPT4 with personal subscription

Free, always

Beta Cloud Scale

- 10 projects
- 10 websites
- 5 team members
- Team Support Helpdesk
- AI Chat Assistant per visitor
- Personal AI Chat Assistant per team member
- 100K AI queries
- 100 vector documents index
- 1 GB storage
- GPT3.5, Mistral, Llama 7B included
- GPT4 included
- Mistral Large, Llama 70B on request

Cloud Perks

- Semantic Search Integration
- Telegram, WhatsApp bots
- Discord, Slack integration

Scale Up

- $5 per +1 team member
- $10 per +100 documents
- $10 per +1 GB storage
- $100 per +1 million AI queries

$5/month (was $20)

* Nexai AI queries include queries to GPT3.5 Turbo, GPT4, Meta Llama, Mistral Large, Nexai AI and Nexai Semantic Search.

* AI queries are created by AI Chat, AI Search, knowledgebase indexing, chat simulations, question/answer and search phrase generation.
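To see how the Scale Up prices add up, here is a rough estimate in TypeScript. It is only a sketch: the `estimateMonthlyCost` helper and the `Usage` shape are hypothetical, the $5/month base price is the one listed for Beta Cloud Scale, and billing add-ons in whole blocks (for example, 150 extra documents counting as two blocks of 100) is an assumption, not something the pricing list states.

```typescript
// Hypothetical usage shape for a Beta Cloud Scale project.
interface Usage {
  teamMembers: number
  documents: number
  storageGb: number
  aiQueries: number
}

// Amounts included in the plan, taken from the Beta Cloud Scale list.
const INCLUDED: Usage = { teamMembers: 5, documents: 100, storageGb: 1, aiQueries: 100000 }

// Listed plan price in USD per month.
const BASE_PRICE = 5

// Whole add-on blocks needed to cover usage above the included amount.
// Assumption: partial blocks are rounded up.
function blocks(used: number, included: number, blockSize: number): number {
  return Math.max(0, Math.ceil((used - included) / blockSize))
}

// Estimated monthly cost in USD using the Scale Up prices.
function estimateMonthlyCost(usage: Usage): number {
  return (
    BASE_PRICE +
    5 * blocks(usage.teamMembers, INCLUDED.teamMembers, 1) +
    10 * blocks(usage.documents, INCLUDED.documents, 100) +
    10 * blocks(usage.storageGb, INCLUDED.storageGb, 1) +
    100 * blocks(usage.aiQueries, INCLUDED.aiQueries, 1000000)
  )
}
```

Under these assumptions, a project with 7 team members and 250 documents would come to $5 + 2 × $5 + 2 × $10 = $35 per month.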

Compare Subscriptions -> Dashboard -> Billing

Loved by Users

Nexai API

Go further and create your own apps using the AI-enabled API.

Nexai.js

const resp = await nexai.add('https://nexai.site').chat('How do I install nexai?')

AI Response

    {
      chat: "Nexai can be installed via `npm install nexai.js`",
      sources: [
        "https://nexai.site/docs/get-started/",
        "https://nexai.site/docs/js-library/"
      ]
    }
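An app consuming this response might type it and render the answer together with its sources. The sketch below assumes the response always has the `chat` and `sources` fields shown above; the `NexaiChatResponse` interface and `formatChatResponse` helper are illustrative names, not part of nexai.js.

```typescript
// Assumed response shape, based on the example response shown above.
interface NexaiChatResponse {
  chat: string
  sources: string[]
}

// Render the answer followed by a numbered list of its source URLs.
function formatChatResponse(resp: NexaiChatResponse): string {
  const sourceLines = resp.sources.map((url, i) => `[${i + 1}] ${url}`)
  return [resp.chat].concat(sourceLines).join('\n')
}

const exampleResponse: NexaiChatResponse = {
  chat: 'Nexai can be installed via `npm install nexai.js`',
  sources: ['https://nexai.site/docs/get-started/', 'https://nexai.site/docs/js-library/'],
}

console.log(formatChatResponse(exampleResponse))
```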

About Nexai

We needed state-of-the-art AI Chat Support with a fully customizable UI that can be integrated into any website or app.

We also needed a full AI-powered API to build our own applications on top of the realtime content we deliver.

None of the existing solutions exposed the underlying AI as well as the UI to developers. Our WebApp UI choice was React with RadixUI but we also wanted to use the underlying AI for Mobile and other platforms.

We decided to build upon the latest AI toolkits, connecting to state-of-the-art AIs to create our own microservice to suit our needs.

We started from the base and tied it to an API so that developers have full control over the AI they prefer, the choice of vector database, the type of embeddings and the data to index. All of this comes with sane defaults to allow the simplest usage as well.

Our testing and research showed that AI requests are expensive and slow: queries to the large LLMs took about 1s, while we were used to 50ms response times from APIs.

We created a few strategies to deliver 50ms response times. We realized the large AIs could create accurate predicted responses as well as predicted semantic equivalents. This allowed us to build on-device search, with flexsearch queries predicted by AI. Most queries never call the API; they are processed in the browser or on another device!
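The on-device idea can be sketched as follows: each answer ships with a list of AI-predicted search phrases, and a query is answered locally whenever it overlaps strongly enough with one of them. This is an illustration only. A simple token-overlap score stands in for flexsearch, and the `PredictedEntry` shape, the `searchLocal` helper and the 0.6 threshold are assumptions, not Nexai's actual implementation.

```typescript
// Hypothetical shape: an answer plus the search phrases an AI predicted for it.
interface PredictedEntry {
  answer: string
  phrases: string[]
}

// Lowercase the text and split it into alphanumeric tokens.
function tokenize(text: string): string[] {
  return text.toLowerCase().match(/[a-z0-9]+/g) || []
}

// Fraction of query tokens that also occur in the phrase.
function overlap(queryTokens: string[], phrase: string): number {
  if (queryTokens.length === 0) return 0
  const phraseTokens = tokenize(phrase)
  const matched = queryTokens.filter((t) => phraseTokens.indexOf(t) !== -1)
  return matched.length / queryTokens.length
}

// Return the best local answer, or null when the caller should fall back to the API.
function searchLocal(query: string, entries: PredictedEntry[], minOverlap = 0.6): string | null {
  const queryTokens = tokenize(query)
  let bestAnswer: string | null = null
  let bestScore = 0
  for (const entry of entries) {
    for (const phrase of entry.phrases) {
      const score = overlap(queryTokens, phrase)
      if (score > bestScore) {
        bestScore = score
        bestAnswer = entry.answer
      }
    }
  }
  return bestScore >= minOverlap ? bestAnswer : null
}
```

A `null` result is the signal to call the API, so only queries the predicted phrases do not cover pay the network round trip.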

We then used semantic caches for the API. Each API call for an AI query goes through a low-latency semantic search -> a fast LLM -> a large LLM such as GPT or Mistral. This pipeline delivered 50ms responses 95% of the time once the semantic cache was fully built for a knowledgebase.

After building and testing our AI pipeline, we decided to open it up to other developers to ease their development. Hello Nexai!
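One way to picture this tiered pipeline is as a fallback chain: try the semantic cache first, then a fast LLM, then a large LLM, and store whatever answer is produced so that similar queries hit the cache next time. The sketch below is an interpretation under several assumptions. The fallback order is read from the description above, the `SemanticCache` class, the `Tiers` interface and the 0.9 cosine threshold are hypothetical, and the tiers are kept synchronous for brevity where real embedding and LLM calls would be asynchronous.

```typescript
type Vector = number[]
type Tier = 'cache' | 'fast' | 'large'

// Injected back ends (all hypothetical): an embedder and two LLM tiers.
interface Tiers {
  embed: (query: string) => Vector
  fastLlm: (query: string) => string | null // null means "not confident, escalate"
  largeLlm: (query: string) => string
}

// Cosine similarity; assumes both vectors have the same length.
function cosine(a: Vector, b: Vector): number {
  let dot = 0
  let normA = 0
  let normB = 0
  for (let i = 0; i < a.length; i++) {
    dot += a[i] * b[i]
    normA += a[i] * a[i]
    normB += b[i] * b[i]
  }
  return normA === 0 || normB === 0 ? 0 : dot / Math.sqrt(normA * normB)
}

// Stores answers keyed by query embedding and returns the closest one above a threshold.
class SemanticCache {
  private entries: { embedding: Vector; answer: string }[] = []
  private threshold: number

  constructor(threshold = 0.9) {
    this.threshold = threshold
  }

  get(embedding: Vector): string | null {
    let best: string | null = null
    let bestScore = this.threshold
    for (const entry of this.entries) {
      const score = cosine(embedding, entry.embedding)
      if (score >= bestScore) {
        best = entry.answer
        bestScore = score
      }
    }
    return best
  }

  set(embedding: Vector, answer: string): void {
    this.entries.push({ embedding, answer })
  }
}

// Answer a query from the cheapest tier that can handle it, then cache the result.
function answerQuery(query: string, cache: SemanticCache, tiers: Tiers): { answer: string; tier: Tier } {
  const embedding = tiers.embed(query)
  const cached = cache.get(embedding)
  if (cached !== null) return { answer: cached, tier: 'cache' }

  const fast = tiers.fastLlm(query)
  const answer = fast !== null ? fast : tiers.largeLlm(query)
  cache.set(embedding, answer)
  return { answer, tier: fast !== null ? 'fast' : 'large' }
}
```

In this reading, the large LLM is only reached when both cheaper tiers miss, which is consistent with latency improving as the cache fills up for a knowledgebase.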